One Of The World’s Most Watched TV Shows Will Be Hosted By Artificial Intelligences

2 July 2021

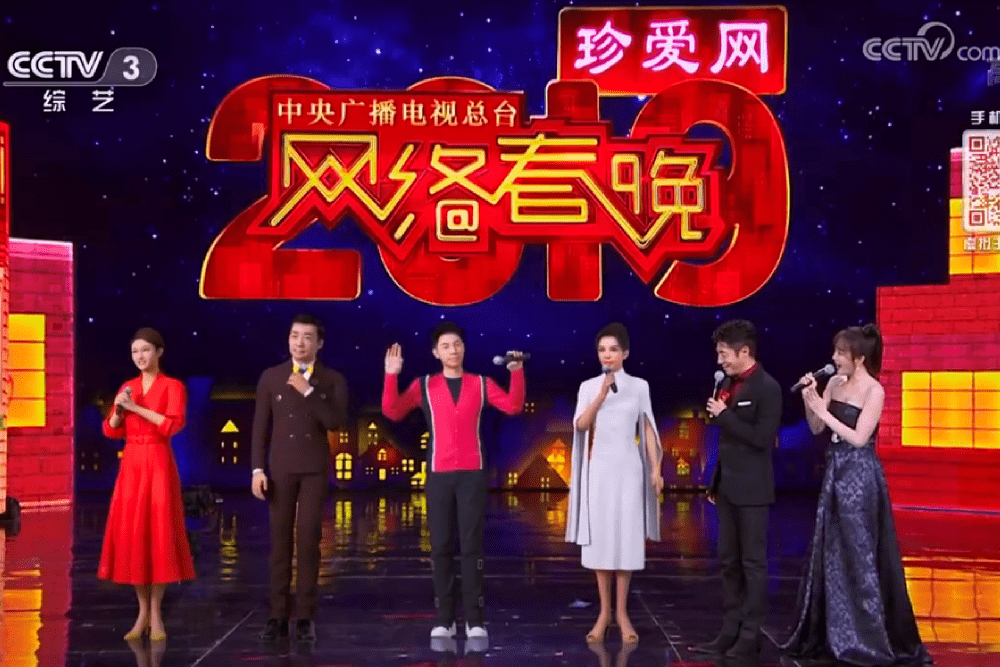

The world’s first artificial intelligence (AI) TV hosts will preside over this year’s China Central Television (CCTV) Spring Festival Gala – one of the world’s most-watched TV broadcasts.

An audience of over one billion people worldwide is expected to tune in to the various shows marking the Chinese lunar new year on February 5. For the Spring Festival Gala, the four well-known human hosts – Beining Sa, Xun Zhu, Bo Gao, and Yang Long – will each be joined by an “AI copy” of themselves: in effect, their very own digital twin.

The “personal artificial intelligences,” created by ObEN Inc, are being touted as the world’s first AI hosts. Rather than simply being computer-generated avatars, the virtual copies of the celebrities have been “rebuilt” from the ground up using AI technologies including machine learning, computer vision, natural language processing, and speech synthesis.

I spoke to ObEN CEO Nikhil Jain, who told me that while the TV event will showcase his company’s technology to the largest TV audience in the world, the potential of personal AIs – PAIs – goes further still, and could revolutionise many areas of society.

In fact, the company is already working to create AI-powered doctors, nurses, and teachers, as well as its highly publicised virtual celebrities.

Jain said the idea came to him when he was looking for ways to stop his young children missing him when he was forced to leave home for long periods on business trips.

He said, “I would travel a lot, and I realized they would miss me … I figured that if there was a copy of me at home – a digital copy that talks with my voice and can interact with my kids, then my kids would miss me a lot less and my wife would be happier with me!”

What differentiates ObEN’s AI-enabled avatars from earlier “digital copies” is that they are able to talk and act like the people they represent, and are powered by artificial intelligence rather than actors or pre-scripted behaviour routines.

The visual data that allows the avatars to look like their subjects is gathered from 3D scanners, but that’s only the beginning of the process of creating an AI counterpart of a human being.

Neural network technology, built around deep learning principles, takes a recording of the subject’s voice, along with personal data about the subject’s behaviours and actions, as input. From that, it can recreate the subject’s speech patterns as well as aspects of their personality.

“We capture the voice data – the person speaking from a script – and the AI then learns the traits of your voice and can generate new content in your voice – either talking or singing.

“And not just in your native language … it’s also able to create content in your voice in languages you can’t even speak – in my case, I might record my data in English, but my personal AI can speak in Chinese, Japanese or Korean,” says Jain.

ObEN was chosen to create the avatars for the Chinese broadcast, following work the company had previously carried out creating avatars of the popular idol group SNH48, along with other well-known Chinese actors and personalities.

Celebrity “digital twins” are seen as a hugely lucrative use case for the AI technology, given the large market for new ways in which fans can interact with their heroes and role models. In some ways, they can be seen as a natural extension of the market for personalised greeting messages and endorsements offered by many famous figures in both Asian and Western markets.

As well as appearing on television, PAIs will offer fans the chance to interact personally with their idols – or very accurate AI copies of them, at least. ObEN has built an interface using the WeChat messaging service that allows anyone to have a conversation with one of its artificial intelligence avatars. The digital celebs can be deployed in virtual reality (VR) and augmented reality (AR) environments, too.

While the use case may seem light-hearted, it’s not hard to see the very serious and consequential implications of AI avatars. It’s possible they could be the first step on the road to allowing humans to carry out feats such as appearing in more than one place at the same time, and possibly – if the AI simulations become realistic enough in future years – living on after our deaths.

Imagine AI avatars that are trained not simply on data taken from a brief interview or questionnaire – but something like a person’s entire social posting history, or even a lifetime of recorded conversations. With more and more of the data we create being stored and uploaded in the form of audio or video recordings, it’s not entirely unlikely that this will happen one day.

It’s clear that this would make it possible to build very lifelike, very complete “simulations” of specific humans – and would raise very complex and tricky ethical conundrums.

For now, though, ObEN – which counts companies such as Tencent and Softbank among its investors – is branching out from AI celebrities and investigating more “serious” (in Jain’s own words) applications of the technology.

“We’re expanding into the healthcare space – with virtual doctors and nurses that are able to interact and give non-clinical advice to patients – answer questions about their health or give reminders.

“And we’re also working in areas like education to create virtual teachers.”

Another potential use being explored is retail, where ObEN is working with leading Asian retailer K11 on the development of “virtual concierge” AIs, to add an (artificial) human touch to the experience of online shopping.

Bernard Marr is a world-renowned futurist, influencer and thought leader in the fields of business and technology, with a passion for using technology for the good of humanity.

He is a best-selling author of over 20 books, writes a regular column for Forbes and advises and coaches many of the world’s best-known organisations.

He has a combined following of 4 million people across his social media channels and newsletters and was ranked by LinkedIn as one of the top 5 business influencers in the world.

Bernard’s latest book is ‘Generative AI in Practice’.
